
1. Symptoms:

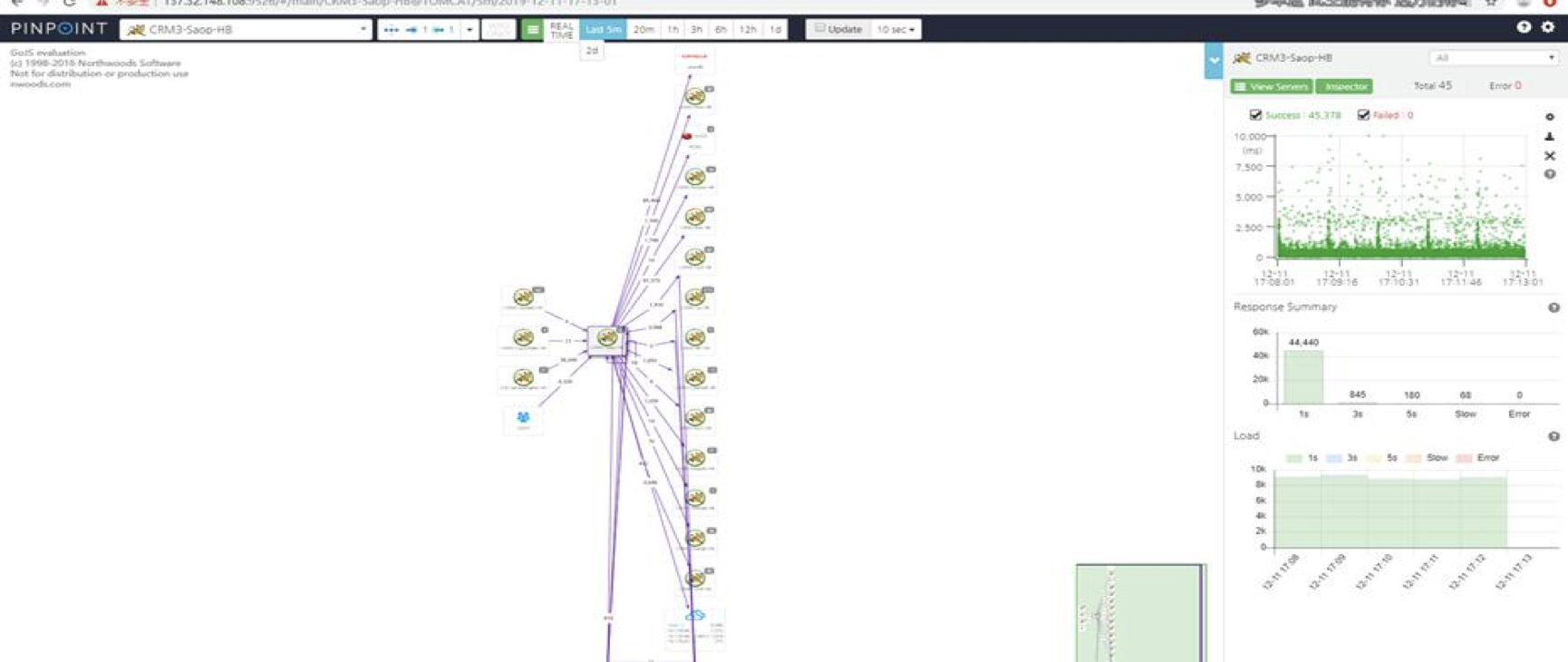

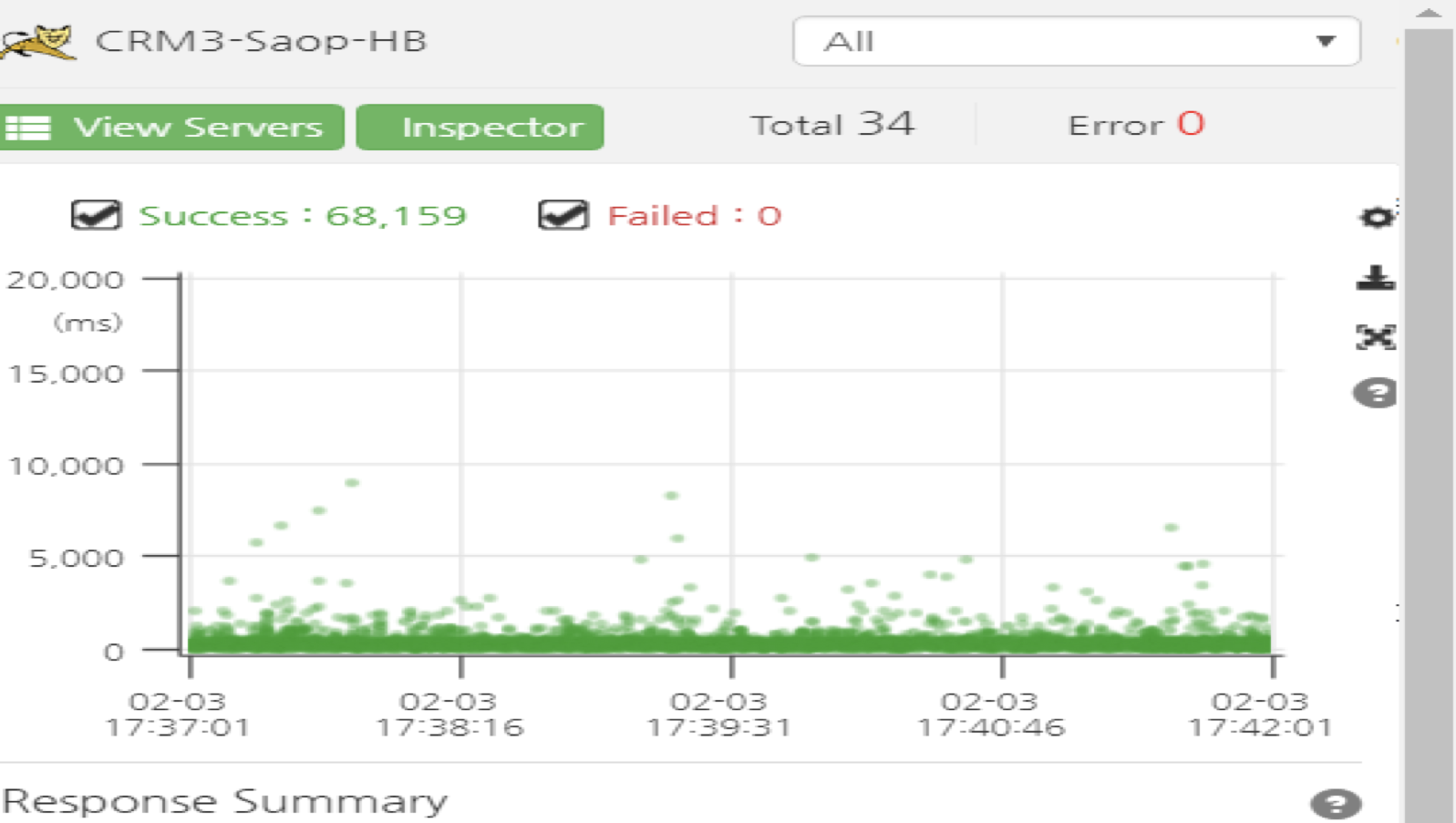

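Response times for some services fluctuate on a regular schedule: a spike occurs every minute, showing up as distinct bars on the response-time chart.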
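One way to confirm that spikes really recur on a one-minute cycle, rather than at random, is to fold each slow sample onto its offset within the minute. The following is a minimal sketch, not the tooling used in this investigation: the function names, the `(timestamp, latency)` sample format, and the thresholds are all assumptions made for illustration.

```python
from collections import Counter


def spike_offsets(samples, threshold_s, period_s=60):
    """Count slow samples by their offset (in seconds) within the period.

    samples: iterable of (unix_timestamp, latency_seconds) pairs.
    """
    return Counter(
        int(ts) % period_s for ts, latency in samples if latency > threshold_s
    )


def looks_periodic(samples, threshold_s, period_s=60, tolerance_s=2, min_spikes=2):
    """Return True if every spike falls within tolerance_s of the most common offset."""
    offsets = spike_offsets(samples, threshold_s, period_s)
    if sum(offsets.values()) < min_spikes:
        return False  # too few spikes to say anything about periodicity
    peak = offsets.most_common(1)[0][0]

    def circular_distance(a, b):
        # Distance on a clock face, so offsets 59 and 0 are 1 second apart.
        d = abs(a - b) % period_s
        return min(d, period_s - d)

    return all(circular_distance(o, peak) <= tolerance_s for o in offsets)
```

If all slow requests cluster at the same second of every minute, a once-a-minute job (a cron task, a scrape, a reload) becomes the prime suspect.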

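2. Analysis:

The deployment uses two layers of nginx, nginx (access layer) -> nginx (routing layer), followed by the application clusters of the service layer: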

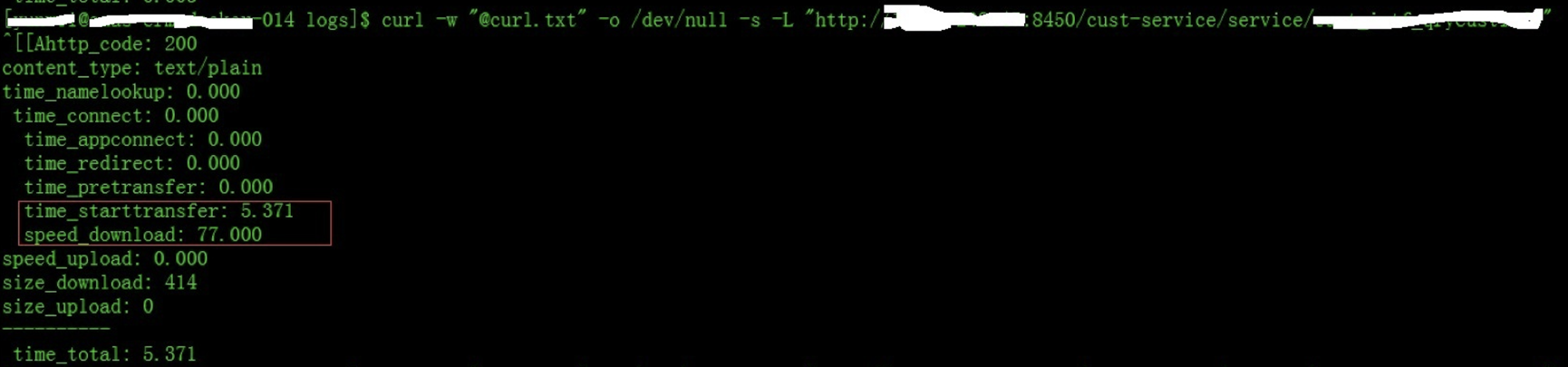

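· 1) As shown in the figure below, calling the service with curl on the server reproduces the problem: a request normally takes 3 ms, but takes more than 5 s during a spike. The fault therefore lies somewhere on the call path through nginx and the backend.

· 2) Calling the service-layer interface directly with curl shows no problem, so the fault is in nginx.

· 3) Bypassing the access-layer nginx and calling the routing-layer nginx address directly still reproduces the problem, so the fault is located in the routing layer.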

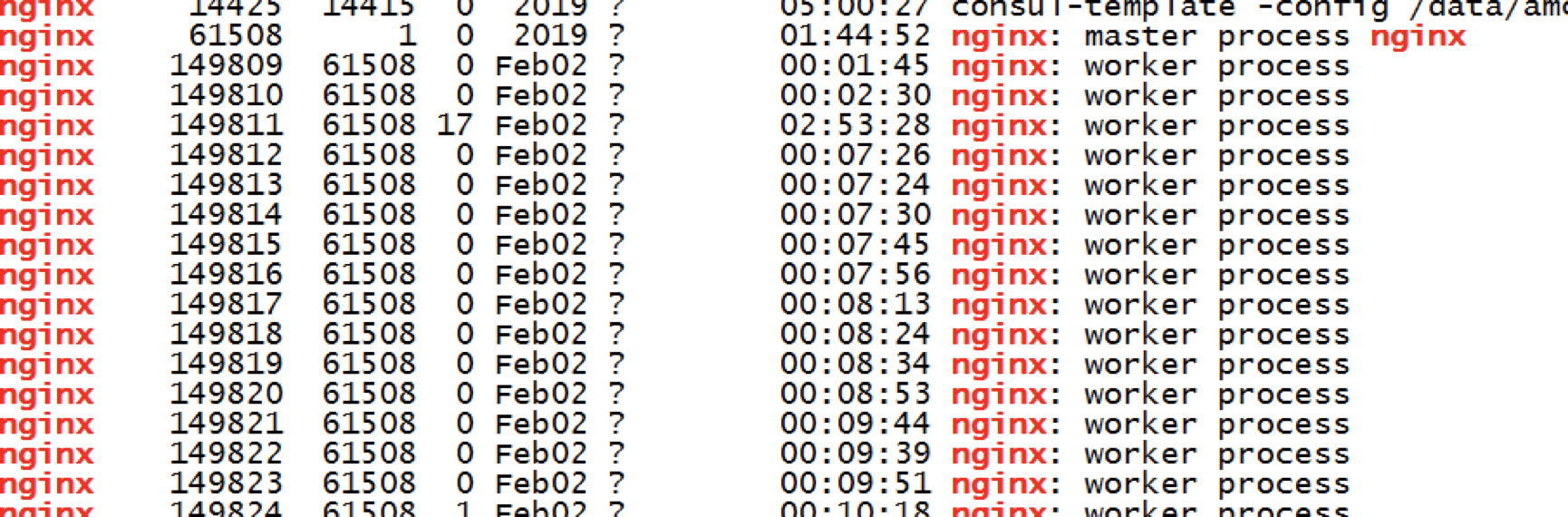

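· 4) The routing-layer nginx is reloaded periodically as services register, which can affect performance. However, checking the process status on Linux showed no regular, periodic restart/reload.

· 5) The routing-layer nginx was found to have a scheduled task that runs every minute.

· 6) After the monitoring collection program was stopped, the problem went away.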
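The layer-by-layer elimination in steps 1) to 3) can be sketched as a small probe that times repeated calls against each layer, starting from the service and moving outward, and reports the first layer where slow responses appear. This is only an illustration: `probe`, `first_slow_layer`, and the layer names are hypothetical, and in practice each callable would wrap an HTTP request (for example via `urllib.request.urlopen`) to that layer's address.

```python
import time


def probe(call, attempts, interval_s=0.0):
    """Invoke call() `attempts` times and return the latency of each call in seconds."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    latencies = []
    for _ in range(attempts):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
        time.sleep(interval_s)  # pause between probes; 0 means back-to-back
    return latencies


def first_slow_layer(layers, attempts, slow_s, interval_s=0.0):
    """Return the name of the first layer whose worst latency exceeds slow_s.

    layers: list of (name, callable) ordered from the innermost layer
    (the service itself) to the outermost (the access-layer nginx).
    Returns None if no layer shows a slow response.
    """
    for name, call in layers:
        if max(probe(call, attempts, interval_s)) > slow_s:
            return name
    return None
```

Because the spikes described here occur once a minute, a real run would need to probe each layer for at least a couple of minutes (for instance `attempts=180, interval_s=1.0`) before concluding that a layer is clean.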

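3. Root cause analysis:

Prometheus, the PaaS monitoring component, periodically calls the nginx monitoring module to collect performance metrics for analysis, dashboards, and alerting. The module that exposes nginx metrics is prometheus-lua, which keeps all of its metrics in a single shared dictionary. Whenever the collector accesses the metrics endpoint, that dictionary is locked, which makes the nginx workers wait. The more metrics there are and the higher the traffic, the greater the impact. Prometheus calls this nginx monitoring module once a minute, blocking nginx's request handling and producing the regular fluctuation in response times. Because this province has high traffic and a large cluster, it was affected more severely. Replacing prometheus-lua with nginx's vts module resolved the problem. The library's own documentation describes this limitation: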

Please keep in mind that all metrics stored by this library are kept in a single shared dictionary (lua_shared_dict). While exposing metrics the module has to list all dictionary keys, which has serious performance implications for dictionaries with large number of keys (in this case this means large number of metrics OR metrics with high label cardinality). Listing the keys has to lock the dictionary, which blocks all threads that try to access it (i.e. potentially all nginx worker threads).

There is no elegant solution to this issue (besides keeping metrics in a separate storage system external to nginx), so for latency-critical servers you might want to keep the number of metrics (and distinct metric label values) to a minimum.
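The blocking effect described above can be imitated with a toy model. This is a sketch under loud assumptions: it uses Python threads and a `threading.Lock` as a stand-in for nginx worker processes and the `lua_shared_dict` lock, the `SharedDict` class and `measure_worker_stall` function are invented for illustration, and the per-key cost is simulated with `time.sleep`. It only shows the shape of the problem: a reader that holds the lock while listing every key stalls any writer for a time proportional to the number of keys.

```python
import threading
import time


class SharedDict:
    """Toy stand-in for a shared dictionary: a single lock guards all access."""

    def __init__(self):
        self._lock = threading.Lock()
        self._data = {}

    def incr(self, key, value=1):
        # What a worker does on every request: update one counter.
        with self._lock:
            self._data[key] = self._data.get(key, 0) + value

    def dump_all(self, per_key_cost_s=0.0, started=None):
        # What the metrics endpoint does: list every key under the lock.
        with self._lock:
            if started is not None:
                started.set()  # signal that the lock is now held
            snapshot = []
            for key, value in self._data.items():
                time.sleep(per_key_cost_s)  # simulated cost of visiting one key
                snapshot.append((key, value))
            return snapshot


def measure_worker_stall(n_keys, per_key_cost_s):
    """Return how long one counter update waits while a scrape is in progress."""
    shared = SharedDict()
    for i in range(n_keys):
        shared.incr(f"metric_{i}")
    started = threading.Event()
    scraper = threading.Thread(target=shared.dump_all, args=(per_key_cost_s, started))
    scraper.start()
    started.wait()  # make sure the scrape holds the lock first
    t0 = time.perf_counter()
    shared.incr("requests_total")  # the "request" that gets blocked
    stall = time.perf_counter() - t0
    scraper.join()
    return stall
```

In this model the stall grows linearly with `n_keys`, which matches the observation that deployments with more metrics and higher label cardinality suffer more.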
